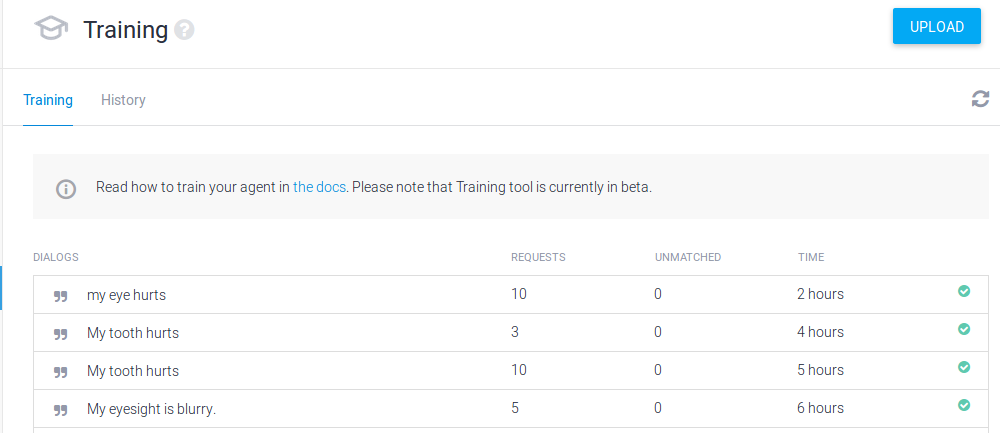

By the end of Tuesday, Wilbur had fielded 28 queries and complaints (including a few from me).

I classified 22 of these as health-related complaints, and Wilbur recognized only 6 of those (about 27%).

Context-related issues

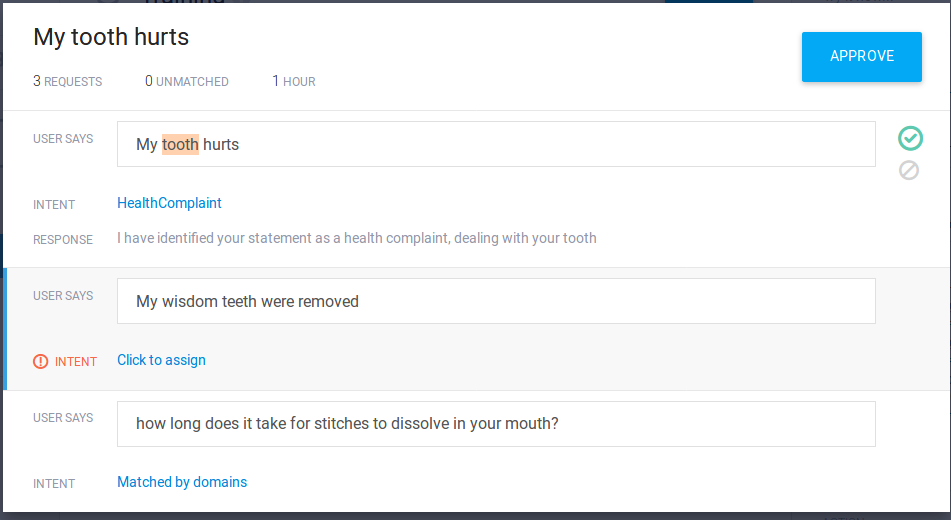

I came across some interesting issues in my analysis of Tuesday’s results. In the series of related statements shown in the figure above, Wilbur is not able to remember the context of the conversation. He recognized the first statement, and even the nature of the problem. The second statement is clearly (to us) related to the first, even though it is more of an explanation than a complaint, but Wilbur had no idea what to do with it. The third statement is clearly a follow-up to the first two, but it doesn’t match anything Wilbur has seen before as a health complaint (partly because it isn’t one), so he had to rely on one of his default/fall-back intents to come up with an answer.

On a related note, I came across a context issue in my own interactions with Wilbur yesterday. After making a couple of random complaints (which were recognized), I said “I’m feeling good”, which was recognized as a health complaint. So I tried to reassure Wilbur with “I’m feeling really good, never better”, which seemed to confuse him (“I really can’t help you with never better. I’m just supposed to focus is on this site.”). When I then repeated that I felt “really good”, Wilbur seemed to switch to a different intent and congratulated me. From then on, repeating “I’m feeling good” resulted in more congratulations and good wishes from Wilbur.

In this case, Wilbur got a little stuck in one way of looking at things (i.e., health complaints), but once he was forced to break out of that context, he switched to another and answered the exact same statement in a completely different way.

For the Health Complaint intent, I had not formally set a context, so each statement is not linked to the previous one and is more likely to be processed as a completely independent event. This was partly because I just wanted to see whether Wilbur could be trained to recognize simple one-sentence complaints. Setting a context for an intent is more useful when building a bot/assistant that can handle a whole conversation (a more complex task).
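To make the difference concrete, here is a toy sketch in Python of intent matching with and without a context. It is not how Wilbur's platform works internally: the `Intent` and `Bot` classes, the keyword matching, the `complaint` context name, and the sample sentences are all invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Intent:
    name: str
    keywords: tuple                    # words that trigger this intent
    requires: frozenset = frozenset()  # contexts that must already be active
    sets: frozenset = frozenset()      # contexts switched on when the intent matches


@dataclass
class Bot:
    intents: list
    active: set = field(default_factory=set)  # contexts currently active

    def reply(self, text):
        lowered = text.lower()
        for intent in self.intents:
            # Skip intents whose required contexts are not active yet.
            if not intent.requires <= self.active:
                continue
            if any(word in lowered for word in intent.keywords):
                self.active |= intent.sets
                return intent.name
        return "fallback"


# Hypothetical intents: a follow-up that only makes sense after a complaint.
detail = Intent("complaint_detail", ("started", "since"),
                requires=frozenset({"complaint"}))
plain = Intent("health_complaint", ("headache", "hurts"))
linked = Intent("health_complaint", ("headache", "hurts"),
                sets=frozenset({"complaint"}))

stateless = Bot([detail, plain])    # complaint intent sets no context
contextual = Bot([detail, linked])  # complaint intent sets "complaint"
for bot in (stateless, contextual):
    print(bot.reply("I have a headache"), "->", bot.reply("It started this morning"))
```

The first bot treats the second sentence as an unrelated event and falls back, much as Wilbur did; the second bot recognizes it as a follow-up only because the earlier complaint left a context behind.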

To see Wilbur use context, try asking him to help you find an assistant. In this case, he is programmed to carry on a short conversation with you to collect some information. At the end, he will tell you that he’ll look into it for you, but don’t hold your breath – I didn’t have time to figure out how to link Wilbur with the back-end processing program I was writing to handle that part.
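A conversation like this can be pictured as the bot remembering which question it is waiting on. The sketch below is a guess at the general shape, not Wilbur's actual configuration: the `AssistantFinder` class, the `task` and `hours` slots, and the prompts are hypothetical, since I haven't listed here what Wilbur really asks.

```python
class AssistantFinder:
    # Hypothetical questions, asked in order.
    PROMPTS = {
        "task": "What do you need help with?",
        "hours": "How many hours a week?",
    }

    def __init__(self):
        self.answers = {}    # slot name -> what the user said
        self.pending = None  # slot we are currently waiting on

    def reply(self, text):
        # If a question is outstanding, treat this message as its answer.
        if self.pending is not None:
            self.answers[self.pending] = text.strip()
            self.pending = None
        # Ask for the next piece of information that is still missing.
        for slot, prompt in self.PROMPTS.items():
            if slot not in self.answers:
                self.pending = slot
                return prompt
        # Everything collected; a real bot would hand off to a back end here.
        return "Thanks, I'll look into it for you."


finder = AssistantFinder()
for message in ("Help me find an assistant", "Filing paperwork", "10"):
    print(finder.reply(message))
```

The `pending` attribute plays the role of the context: the same reply, such as "10", only makes sense because the bot knows which question came before it.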